I have been stuck on this for several days and I am worn out.

Versions:

kubesphere 2.1.1

k8s 1.17.3

helm 2.16.2

The minimal installation succeeded:

**************************************************

#####################################################

### Welcome to KubeSphere! ###

#####################################################

Console: http://172.31.47.250:30880

Account: admin

Password: P@88w0rd

NOTES:

1. After logging into the console, please check the

monitoring status of service components in

the "Cluster Status". If the service is not

ready, please wait patiently. You can start

to use when all components are ready.

2. Please modify the default password after login.

#####################################################

After enabling devops, notification, and alerting with kubectl edit cm -n kubesphere-system ks-installer, I checked the installer log, which shows that deploying minio timed out.

TASK [common : Kubesphere | Deploy minio] **************************************

fatal: [localhost]: FAILED! => {"changed": true, "cmd": "/usr/local/bin/helm upgrade --install ks-minio /etc/kubesphere/minio-ha -f /etc/kubesphere/custom-values-minio.yaml --set fullnameOverride=minio --namespace kubesphere-system --wait --timeout 1800\n", "delta": "0:30:25.772021", "end": "2020-06-29 15:52:09.602253", "msg": "non-zero return code", "rc": 1, "start": "2020-06-29 15:21:43.830232", "stderr": "Error: timed out waiting for the condition", "stderr_lines": ["Error: timed out waiting for the condition"], "stdout": "Release \"ks-minio\" does not exist. Installing it now.", "stdout_lines": ["Release \"ks-minio\" does not exist. Installing it now."]}

...ignoring

TASK [common : debug] **********************************************************

ok: [localhost] => {

"msg": [

"1. check the storage configuration and storage server",

"2. make sure the DNS address in /etc/resolv.conf is available.",

"3. execute 'helm del --purge ks-minio && kubectl delete job -n kubesphere-system ks-minio-make-bucket-job'",

"4. Restart the installer pod in kubesphere-system namespace"

]

}

TASK [common : fail] ***********************************************************

fatal: [localhost]: FAILED! => {"changed": false, "msg": "It is suggested to refer to the above methods for troubleshooting problems ."}

PLAY RECAP *********************************************************************

localhost : ok=34 changed=22 unreachable=0 failed=1 skipped=74 rescued=0 ignored=5
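Hints 1 and 2 in the debug output above can be checked with something like the following. This is only a sketch: the function name `check_minio_prereqs` is made up for illustration, and it assumes kubectl is already configured for this cluster. Helm's `--wait` flag waits for pods and PVCs to become ready, so a PVC stuck in Pending (for example because no usable StorageClass exists) is one possible cause of this timeout, though not necessarily the cause here.

```shell
#!/bin/sh
# Sketch only: collects the facts behind hints 1 and 2 of the installer output.
# The namespace is taken from the log above; check_minio_prereqs is a hypothetical name.
NS=kubesphere-system

check_minio_prereqs() {
    # Hint 1: list StorageClasses; a default one is shown with "(default)".
    kubectl get storageclass
    # PVCs should be Bound; Pending points at a storage problem.
    kubectl get pvc -n "$NS"
    # Pod status and recent events often show why a pod never becomes Ready.
    kubectl get pods -n "$NS" -o wide
    kubectl get events -n "$NS" --sort-by=.lastTimestamp
    # Hint 2: the DNS resolvers configured on this node.
    cat /etc/resolv.conf
}
```

Running `check_minio_prereqs` on a node with cluster access prints the storage, pod, and DNS state in one pass.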

Following the returned message, I ran helm del --purge ks-minio && kubectl delete job -n kubesphere-system ks-minio-make-bucket-job, but the installation still fails.
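For reference, the full retry sequence from hints 3 and 4 in the debug output, including the installer restart, might look like the sketch below. It uses Helm 2 syntax to match helm 2.16.2. The function names are made up for illustration, and the label `app=ks-install` is an assumption about how the installer pod is labelled; it is worth confirming with `kubectl get pods -n kubesphere-system --show-labels` first. It also assumes the installer pod is managed by a controller that recreates it after deletion.

```shell
#!/bin/sh
# Sketch only: hints 3 and 4 from the installer output, Helm 2 syntax.
NS=kubesphere-system
# ASSUMPTION: the installer pod carries this label; verify before relying on it.
INSTALLER_LABEL="app=ks-install"

retry_minio_install() {
    # Hint 3: remove the failed release and the leftover bucket job.
    helm del --purge ks-minio
    kubectl delete job -n "$NS" ks-minio-make-bucket-job --ignore-not-found
    # Hint 4: delete the installer pod so that it is recreated and reruns the install.
    kubectl delete pod -n "$NS" -l "$INSTALLER_LABEL"
}

follow_installer_log() {
    # Look up the current installer pod by label and stream its log.
    pod=$(kubectl get pod -n "$NS" -l "$INSTALLER_LABEL" -o jsonpath='{.items[0].metadata.name}')
    kubectl logs -n "$NS" "$pod" -f
}
```

Calling `retry_minio_install` and then `follow_installer_log` shows whether the minio task gets past the point where it timed out before.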

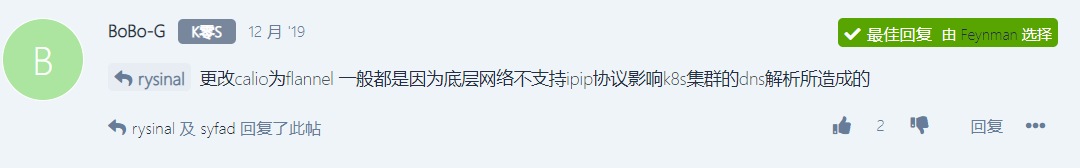

I have seen a suggestion about this elsewhere, but I could not find anywhere to change kube_network_plugin: flannel.

@Feynman @Cauchy please help!